In 2026, Prompt Engineering is no longer a “hack”; it has evolved into the core discipline for programming how enterprise-grade autonomous systems think, reason, and execute. We have moved from manipulating words to architecting machine intelligence.

The Transition: From Manual Crafting to Cognitive Systems Engineering

Until recently, interacting with Large Language Models (LLMs) felt like an imprecise craft. We experimented with phrasing, hoping for an optimal result. Today, that era is behind us.

Modern Prompt Engineering is not about writing questions in a chat window; it is about designing the processing logic of an AI. It is the critical bridge that transforms ambiguous human intent into precise, scalable, and reliable computational execution.

If traditional code manages data, Prompt Engineering manages reasoning flows.

Cognitive Architectures: Structuring Machine Thought

For an AI Agent to handle complex tasks without “hallucinating,” we need more than a simple instruction. We need Reasoning Frameworks that push the model to make its logic explicit and check it step by step:

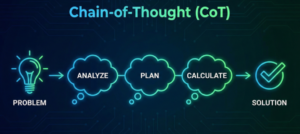

- Chain-of-Thought (CoT): Breaking problems into intermediate logical steps to reduce reasoning errors.

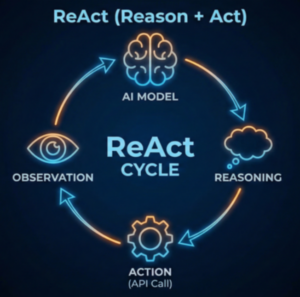

- ReAct (Reason + Act): A closed loop in which the model reasons, executes a real-world action (an API call or a database query), and observes the result before continuing.

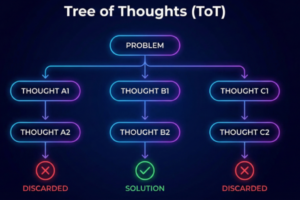

- Tree of Thoughts (ToT): For high-stakes problem solving, the model explores several candidate reasoning branches, evaluates the partial solutions, and prunes dead ends to find the most promising path.
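
The ReAct loop described above can be sketched in a few lines of Python. This is a minimal illustration, not a production agent. `scripted_model` is a hypothetical stub standing in for a real LLM call, and `lookup_order` is a hypothetical tool standing in for an API or database query. The `Thought:`/`Action:`/`Observation:` text format is one common convention, not a fixed standard.

```python
import re
from typing import Callable

# Hypothetical tool registry: stand-ins for a real API call or database query.
TOOLS: dict[str, Callable[[str], str]] = {
    "lookup_order": lambda order_id: {"A17": "shipped", "B42": "pending"}.get(order_id, "unknown"),
}

def scripted_model(transcript: str) -> str:
    """Stub LLM: returns the next Thought/Action (or Final Answer) for the transcript.
    A real agent would send `transcript` to a model here."""
    if "Observation:" not in transcript:
        return "Thought: I need the order status.\nAction: lookup_order[A17]"
    status = transcript.rsplit("Observation:", 1)[1].strip()
    return f"Thought: I have what I need.\nFinal Answer: Order A17 is {status}."

ACTION_RE = re.compile(r"Action: (\w+)\[(.*?)\]")

def react(question: str, model: Callable[[str], str], max_steps: int = 5) -> str:
    transcript = f"Question: {question}\n"
    for _ in range(max_steps):
        step = model(transcript)                        # Reason: model proposes a thought and an action
        transcript += step + "\n"
        if "Final Answer:" in step:
            return step.split("Final Answer:", 1)[1].strip()
        match = ACTION_RE.search(step)
        if match is None:
            raise ValueError(f"Unparseable step: {step!r}")
        tool, arg = match.groups()
        observation = TOOLS[tool](arg)                  # Act: execute the chosen tool
        transcript += f"Observation: {observation}\n"   # Observe: feed the result back to the model
    raise RuntimeError("No final answer within max_steps")

print(react("What is the status of order A17?", scripted_model))  # → Order A17 is shipped.
```

Swapping `scripted_model` for a real model call leaves the loop unchanged. The `max_steps` cap matters in practice because a model can fail to converge on a final answer.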

The Revolution of “Programmatic Prompting” (DSPy)

If manual prompting was the “hardcoding” era of AI, Programmatic Prompting is its CI/CD revolution. Frameworks like DSPy have changed the game by treating prompts as optimizable software parameters.

Instead of humans spending hours tweaking adjectives, we define a Signature (the inputs and expected outputs) and let an optimizer (called a teleprompter in earlier versions of DSPy) “compile” the most effective instructions and examples against a metric. This shifts Prompt Engineering from a subjective task into a reproducible data science pipeline.
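
To make the “compile” idea concrete, here is a toy sketch in plain Python. It is deliberately not DSPy's API: the `Signature` dataclass, `render`, `compile_instruction`, and the stub `fake_lm` are hypothetical names used only for illustration. Real DSPy optimizers call an actual model and can also tune few-shot demonstrations. This version only brute-forces a list of hand-written candidate instructions against a labelled dev set and an exact-match metric.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass(frozen=True)
class Signature:
    """Declares what goes in and what must come out, not how to phrase the prompt."""
    inputs: tuple[str, ...]
    outputs: tuple[str, ...]

def render(sig: Signature, instruction: str, example: dict[str, str]) -> str:
    """Build a prompt from an instruction plus the signature's input fields."""
    fields = "\n".join(f"{name}: {example[name]}" for name in sig.inputs)
    return f"{instruction}\n{fields}\n{sig.outputs[0]}:"

def compile_instruction(
    sig: Signature,
    candidates: list[str],
    lm: Callable[[str], str],
    devset: list[tuple[dict[str, str], str]],
    metric: Callable[[str, str], bool],
) -> tuple[str, float]:
    """Score each candidate instruction on a labelled dev set and keep the best scorer."""
    best, best_score = candidates[0], -1.0
    for instruction in candidates:
        hits = sum(metric(lm(render(sig, instruction, x)), y) for x, y in devset)
        score = hits / len(devset)
        if score > best_score:
            best, best_score = instruction, score
    return best, best_score

# Hypothetical stub model: it only returns a bare label when told to answer in one word.
def fake_lm(prompt: str) -> str:
    label = "positive" if "great" in prompt else "negative"
    return label if "one word" in prompt else f"I think the sentiment is {label}."

sig = Signature(inputs=("review",), outputs=("sentiment",))
devset = [({"review": "great product"}, "positive"), ({"review": "broke in a day"}, "negative")]
candidates = [
    "Classify the sentiment of the review.",
    "Classify the sentiment of the review. Answer with one word: positive or negative.",
]
best, score = compile_instruction(sig, candidates, fake_lm, devset,
                                  metric=lambda pred, gold: pred.strip().lower() == gold)
print(f"{score:.2f} <- {best}")
```

The design point is that the metric, not a human's taste, decides which instruction ships. Widening the pool of candidates is where real optimizers do most of their work.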

The Enterprise AI Stack: Evaluation, Security, and Grounding

A production-ready agent requires more than just a clever prompt; it requires a system of governance:

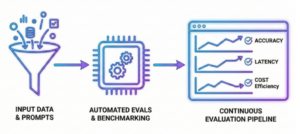

- Continuous Evaluation (Evals): In 2026, a prompt is only as good as its benchmark. We use automated evaluation pipelines to measure accuracy, latency, and cost before any deployment.

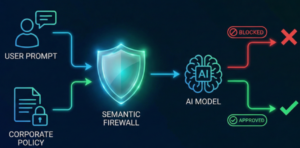

- Guardrails & Governance: We implement semantic firewalls that enforce corporate policies, ensuring agents stay within legal and ethical boundaries while preventing prompt injection.

- RAG & Tool Use: The cognitive architecture is grounded in enterprise reality through Retrieval-Augmented Generation and tool calls, ensuring the agent’s reasoning draws on your private data and live systems, not just its general training data.
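
Continuous evaluation can be sketched as a gate that runs before deployment. This is a minimal sketch built on several assumptions. `stub_flow` is a hypothetical prompt flow standing in for a real model call. Scoring uses exact match. Cost is estimated from character counts as a crude stand-in for token-based pricing, since real providers bill per token. The thresholds are placeholder values.

```python
import time
from dataclasses import dataclass
from typing import Callable

@dataclass
class EvalReport:
    accuracy: float        # fraction of exact-match answers
    mean_latency_s: float  # average wall-clock seconds per call
    est_cost_usd: float    # crude estimate derived from character counts

def evaluate(prompt_fn: Callable[[str], str], cases: list[tuple[str, str]],
             usd_per_1k_chars: float) -> EvalReport:
    """Run every test case through the prompt flow and aggregate the three metrics."""
    correct, chars, latencies = 0, 0, []
    for question, expected in cases:
        start = time.perf_counter()
        answer = prompt_fn(question)
        latencies.append(time.perf_counter() - start)
        correct += int(answer.strip() == expected)
        chars += len(question) + len(answer)  # assumption: characters as a proxy for billed tokens
    return EvalReport(
        accuracy=correct / len(cases),
        mean_latency_s=sum(latencies) / len(latencies),
        est_cost_usd=chars / 1000 * usd_per_1k_chars,
    )

def passes_gate(report: EvalReport, min_accuracy: float, max_latency_s: float,
                max_cost_usd: float) -> bool:
    """Deployment gate: every metric must be within its threshold."""
    return (
        report.accuracy >= min_accuracy
        and report.mean_latency_s <= max_latency_s
        and report.est_cost_usd <= max_cost_usd
    )

# Hypothetical prompt flow under test: a lookup table standing in for a model call.
def stub_flow(question: str) -> str:
    return {"2+2": "4", "capital of France": "Paris"}.get(question, "unknown")

CASES = [("2+2", "4"), ("capital of France", "Paris"), ("3*3", "9")]
report = evaluate(stub_flow, CASES, usd_per_1k_chars=0.002)
print(report.accuracy, passes_gate(report, min_accuracy=0.9, max_latency_s=2.0, max_cost_usd=0.01))
```

Here the flow answers two of three cases, so the gate blocks deployment even though latency and cost are within budget.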

From Single Agents to Multi-Agent Orchestration

The ultimate frontier is no longer the “lonely agent.” We are now designing Orchestration Protocols in which specialized agents, each with its own prompt architecture, collaborate. One agent plans, another executes, and a third audits the work, creating a self-correcting ecosystem that minimizes human intervention.
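
The plan, execute, audit pattern can be sketched as a small control loop. In this illustration the three roles are hypothetical stub functions. In a real system each would be a separately prompted model call. The point is the control flow: the auditor's feedback goes back to the executor, and a step that is still rejected after `max_retries` retries raises an error. That error is a natural hand-off point to a human rather than a silent failure.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class Orchestrator:
    plan: Callable[[str], list[str]]               # planner: goal -> ordered steps
    execute: Callable[[str, str], str]             # executor: (step, auditor feedback) -> result
    audit: Callable[[str, str], tuple[bool, str]]  # auditor: (step, result) -> (accepted, feedback)
    max_retries: int = 2
    log: list[str] = field(default_factory=list)

    def run(self, goal: str) -> list[str]:
        results: list[str] = []
        for step in self.plan(goal):
            feedback = ""
            for attempt in range(1, self.max_retries + 2):  # first try plus max_retries retries
                result = self.execute(step, feedback)
                ok, feedback = self.audit(step, result)
                self.log.append(f"{step} | attempt {attempt} | {'accepted' if ok else 'rejected: ' + feedback}")
                if ok:
                    results.append(result)
                    break
            else:  # no attempt was accepted
                raise RuntimeError(f"Auditor rejected '{step}' after {self.max_retries} retries")
        return results

# Hypothetical stubs standing in for three separately prompted model calls.
def stub_planner(goal: str) -> list[str]:
    return ["draft summary", "add citations"]

def stub_executor(step: str, feedback: str) -> str:
    return f"{step} [fixed: {feedback}]" if feedback else step

def stub_auditor(step: str, result: str) -> tuple[bool, str]:
    if step == "add citations" and "sources" not in result:
        return False, "missing sources"
    return True, ""

orch = Orchestrator(plan=stub_planner, execute=stub_executor, audit=stub_auditor)
results = orch.run("Summarize the Q3 vendor report")
for line in orch.log:
    print(line)
```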

Fusion Case Study: Prompt Engineering in Action with Copilot Studio

In enterprise environments, prompt engineering doesn’t end with crafting clever instructions. At Fusion IT, we use Microsoft Copilot Studio as a true orchestrator: connecting prompts, actions, governance, and corporate knowledge into a production-ready agent system.

Copilot Studio is more than a UI layer; we use it as an execution engine that interprets and runs engineered prompts through three core practices:

- Continuous Evaluation: We run automated pipelines that benchmark each prompt flow for accuracy, latency, and cost-efficiency before it goes live.

- Semantic Guardrails: Our implementations include safeguards against prompt injection and unauthorized data access, enforcing compliance with privacy and security standards.

- Actionable Integration: We bridge SharePoint knowledge bases with external APIs through Power Automate, enabling dynamic, policy-aware actions triggered by user intent.

From Chat to Enterprise Action

One successful deployment involved moving beyond passive FAQ bots to create an AI agent capable of making decisions and acting. We structured prompts as a decision tree that allowed Copilot Studio to determine whether to fetch data from SharePoint or call an external API, depending on the request.

This structure ensures every action is grounded in both static corporate knowledge and real-time transactional data. The result: faster resolutions, smarter agents, and AI implemented to actually get things done.
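
The deployment itself is not shown here, so the following is only a sketch of the decision-tree idea under stated assumptions. In the deployment described, Copilot Studio makes the routing decision from structured prompts. In this sketch, hypothetical keyword rules stand in for that step. `search_knowledge_base` and `call_transaction_api` are hypothetical placeholders, not Copilot Studio, SharePoint, or Power Automate APIs.

```python
from enum import Enum
from typing import Callable

class Route(Enum):
    KNOWLEDGE = "sharepoint"      # static corporate knowledge
    TRANSACTION = "external_api"  # real-time transactional data
    CLARIFY = "ask_user"          # not enough signal to act on

TRANSACTIONAL_NOUNS = ("order", "invoice", "ticket")
KNOWLEDGE_CUES = ("policy", "procedure", "guideline", "how do i")

def route(request: str) -> Route:
    """Keyword rules standing in for the model's classification step."""
    text = request.lower()
    # Node 1: a live record with an identifier needs real-time data.
    if any(noun in text for noun in TRANSACTIONAL_NOUNS) and any(ch.isdigit() for ch in text):
        return Route.TRANSACTION
    # Node 2: a policy or how-to question is answered from the knowledge base.
    if any(cue in text for cue in KNOWLEDGE_CUES):
        return Route.KNOWLEDGE
    # Leaf: ambiguous, so ask a follow-up instead of guessing.
    return Route.CLARIFY

def search_knowledge_base(query: str) -> str:
    # Hypothetical stand-in for a SharePoint knowledge lookup.
    return f"[knowledge] best-matching passage for: {query}"

def call_transaction_api(query: str) -> str:
    # Hypothetical stand-in for an external API reached through a Power Automate flow.
    return f"[api] live record for: {query}"

HANDLERS: dict[Route, Callable[[str], str]] = {
    Route.KNOWLEDGE: search_knowledge_base,
    Route.TRANSACTION: call_transaction_api,
    Route.CLARIFY: lambda query: "Could you share more detail, such as an order or ticket number?",
}

def handle(request: str) -> str:
    return HANDLERS[route(request)](request)

print(handle("What is the status of order 1042?"))
```

Keeping an explicit clarification leaf means an ambiguous request produces a follow-up question instead of a guessed action.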

Conclusion

In 2026, Prompt Engineering is the new high-level programming language. It is not about talking to machines; it is about teaching them to think within the parameters your business requires. The companies leading the next decade are those that don’t just “use AI,” but those that own and control their Cognitive Architecture.

Ready to turn prompts into real outcomes? Let’s build your enterprise agent architecture together.